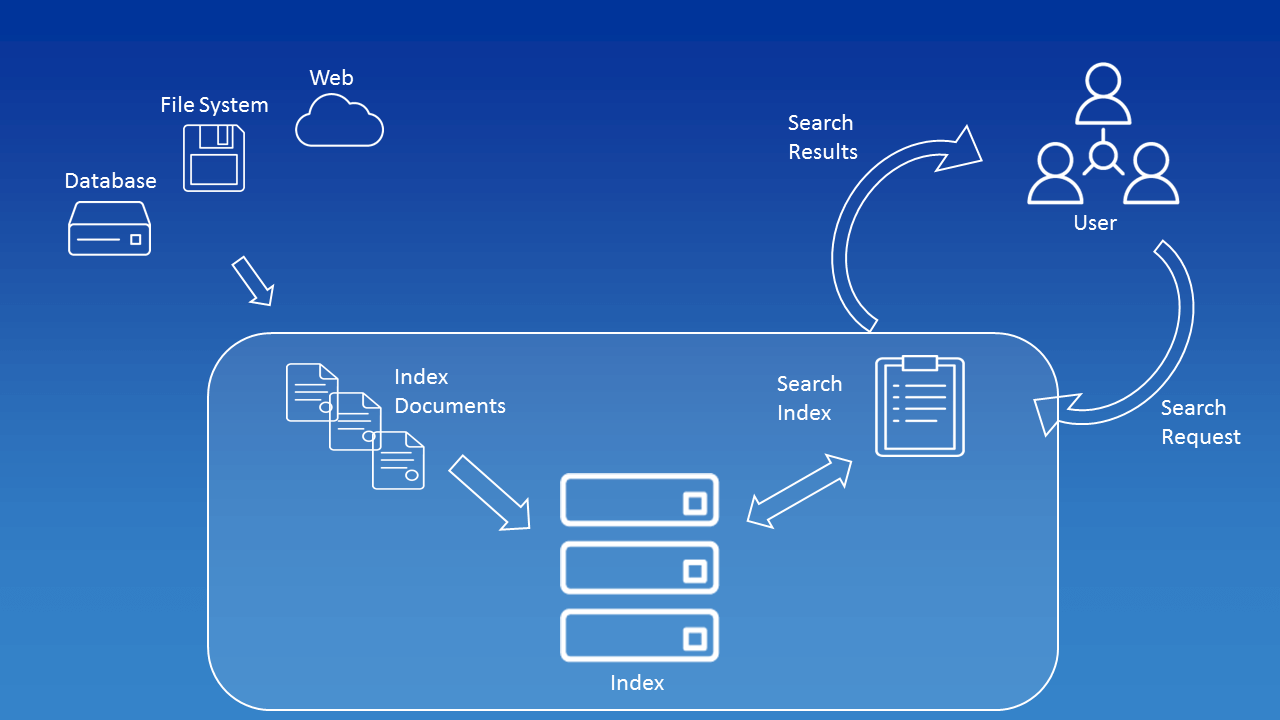

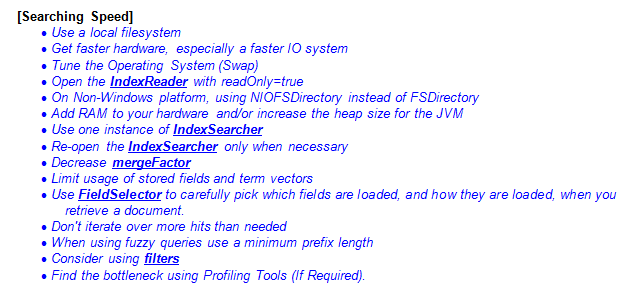

For major changes, please open an issue first to discuss what you would like to change. Please make sure to update tests as appropriate.

In this lesson, we will look at the workings behind one of the most powerful full-text search engines, Apache Lucene. With Apache Lucene, we can use the APIs it exposes in many programming languages and build the features we need. Lucene is one of the most powerful engines, and Elasticsearch is built on top of it. Before we start with an application which demonstrates the working of Apache Lucene, we will look at how Lucene works and at many of its components.

Search is one of the most common operations we perform, multiple times a day. This search can be across web pages on the Web, a music application, a code repository, or a combination of all of these. One might think that a simple relational database can also support searching, and databases like MySQL do support full-text search. But what about the Web, a music application, a code repository, or a combination of all of these? A database cannot store this data in its columns. Even if it could, running a search this big would take an unacceptable amount of time.

A full-text search engine is capable of running a search query on millions of files at once. The velocity at which data is being stored in applications today is huge, and running a full-text search on this volume of data is a difficult task, because the information we need might exist in a single file out of billions of files kept on the web.

The obvious question is: how is Lucene so fast at running full-text search queries? The answer is the indices it creates. Instead of creating a classic index, Lucene makes use of inverted indices. In a classic index, for every document, we collect the full list of words or terms the document contains. In an inverted index, for every word in all the documents, we store which documents and which positions this word or term can be found at. This is a standard, widely used technique which makes search very easy. The diagrams above illustrate this: one is an example of creating a classic index, and the other shows what happens in Lucene.
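The difference between a classic index and an inverted index can be sketched in a few lines of plain Java. This is only a toy illustration under simple assumptions (in-memory maps, whitespace and punctuation tokenizing, lowercase terms). The class and method names are made up for this example, and it does not reflect how Lucene actually stores its index.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.TreeMap;

// Toy illustration only: this is NOT how Lucene stores its index on disk.
class InvertedIndexSketch {

    // Split text into lowercase terms on anything that is not a letter or digit.
    static List<String> tokenize(String text) {
        List<String> terms = new ArrayList<>();
        for (String t : text.toLowerCase(Locale.ROOT).split("[^\\p{L}\\p{N}]+")) {
            if (!t.isEmpty()) {
                terms.add(t);
            }
        }
        return terms;
    }

    // Classic index: document id -> the terms that document contains, in order.
    static Map<Integer, List<String>> classicIndex(List<String> docs) {
        Map<Integer, List<String>> index = new LinkedHashMap<>();
        for (int id = 0; id < docs.size(); id++) {
            index.put(id, tokenize(docs.get(id)));
        }
        return index;
    }

    // Inverted index: term -> (document id -> positions of the term in that document).
    static Map<String, Map<Integer, List<Integer>>> invertedIndex(List<String> docs) {
        Map<String, Map<Integer, List<Integer>>> index = new TreeMap<>();
        for (int id = 0; id < docs.size(); id++) {
            List<String> terms = tokenize(docs.get(id));
            for (int pos = 0; pos < terms.size(); pos++) {
                index.computeIfAbsent(terms.get(pos), k -> new TreeMap<>())
                     .computeIfAbsent(id, k -> new ArrayList<>())
                     .add(pos);
            }
        }
        return index;
    }

    public static void main(String[] args) {
        List<String> docs = List.of(
            "Lucene is a search library",
            "Elasticsearch is built on Lucene");
        // Classic index: to find a term we would have to scan every document's list.
        System.out.println(classicIndex(docs));
        // Inverted index: finding a term is a single map lookup.
        System.out.println(invertedIndex(docs).get("lucene")); // prints {0=[0], 1=[4]}
    }
}
```

With the classic index, answering "which documents contain lucene?" means walking every document's term list. With the inverted index, the same question is one lookup that returns the documents and positions directly.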

Lucene uses transliteration and stemming. Suppose we have multiple documents containing information about apples. If we index those files and search for "apple", we should receive information about which documents contain information about apples. Stemming means that plurals, and other words derived from the base word, will also be retrieved if we search for the singular. Transliteration means that if we search for "mașini", we should also get results containing "masini". Combining the two concepts, we should also get results for "masinile" etc.
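As a rough sketch of how the two ideas combine, the following plain Java example folds diacritics and then strips a few suffixes, so that "mașini", "masini" and "masinile" all reduce to the same term. It is not Lucene's analyzer code: the class name, the tiny suffix list and the minimum stem length are assumptions chosen only to cover the examples above, and Lucene's own analyzers handle this far more carefully.

```java
import java.text.Normalizer;
import java.util.Locale;

// Toy normalizer: NOT Lucene's analyzers, only an illustration of the two ideas.
class TermNormalizerSketch {

    // Hypothetical suffix list, chosen only to cover the examples in the text.
    // Longer suffixes come first so they win over shorter ones.
    private static final String[] SUFFIXES = {"ile", "s", "i"};

    // Transliteration (here: diacritic folding). The letter is decomposed into
    // a base letter plus combining marks, and the marks are then dropped.
    static String foldDiacritics(String term) {
        String decomposed = Normalizer.normalize(term, Normalizer.Form.NFD);
        return decomposed.replaceAll("\\p{M}+", "");
    }

    // Naive stemming: strip the first matching suffix, keeping at least 3 characters.
    static String stem(String term) {
        for (String suffix : SUFFIXES) {
            if (term.endsWith(suffix) && term.length() - suffix.length() >= 3) {
                return term.substring(0, term.length() - suffix.length());
            }
        }
        return term;
    }

    // Apply the same normalization to indexed terms and to query terms.
    static String normalize(String term) {
        return stem(foldDiacritics(term.toLowerCase(Locale.ROOT)));
    }

    public static void main(String[] args) {
        // \u0219 is the Romanian letter s with comma below.
        System.out.println(normalize("ma\u0219ini")); // prints masin
        System.out.println(normalize("masinile"));    // prints masin
        System.out.println(normalize("Apples"));      // prints apple
    }
}
```

Because indexed terms and query terms go through the same `normalize` step, a search for the singular or for the accented spelling ends up looking for the same key in the index.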

Java-based document search engine using Apache Lucene

Example application which showcases how Apache Lucene can be used in a Java application for indexing and searching documents for keywords.

Apache Tika was used to parse the content of multiple file types, including PDF, DOCX etc.

Usage

The app works by using the Lucene Analyzers and Indexer classes. Files are parsed using Tika and then indexed. The indexes are stored in a separate folder, then queries are built and executed.
